CHRIS WEST, DIRECTOR OF SPORTS SCIENCE

After spending 14 years as Associate Head Coach of Sport Performance at the University of Connecticut, Chris West was given a new title in 2014: Director of Sport Science.

West is a prominent thought leader in the sports science community and is in charge of implementing sports science at one of the most successful universities, across the board, in the country.

Interview with Chris West

WHAT MADE YOU CHOOSE COACHMEPLUS TO GATHER AND VISUALIZE YOUR DATA?

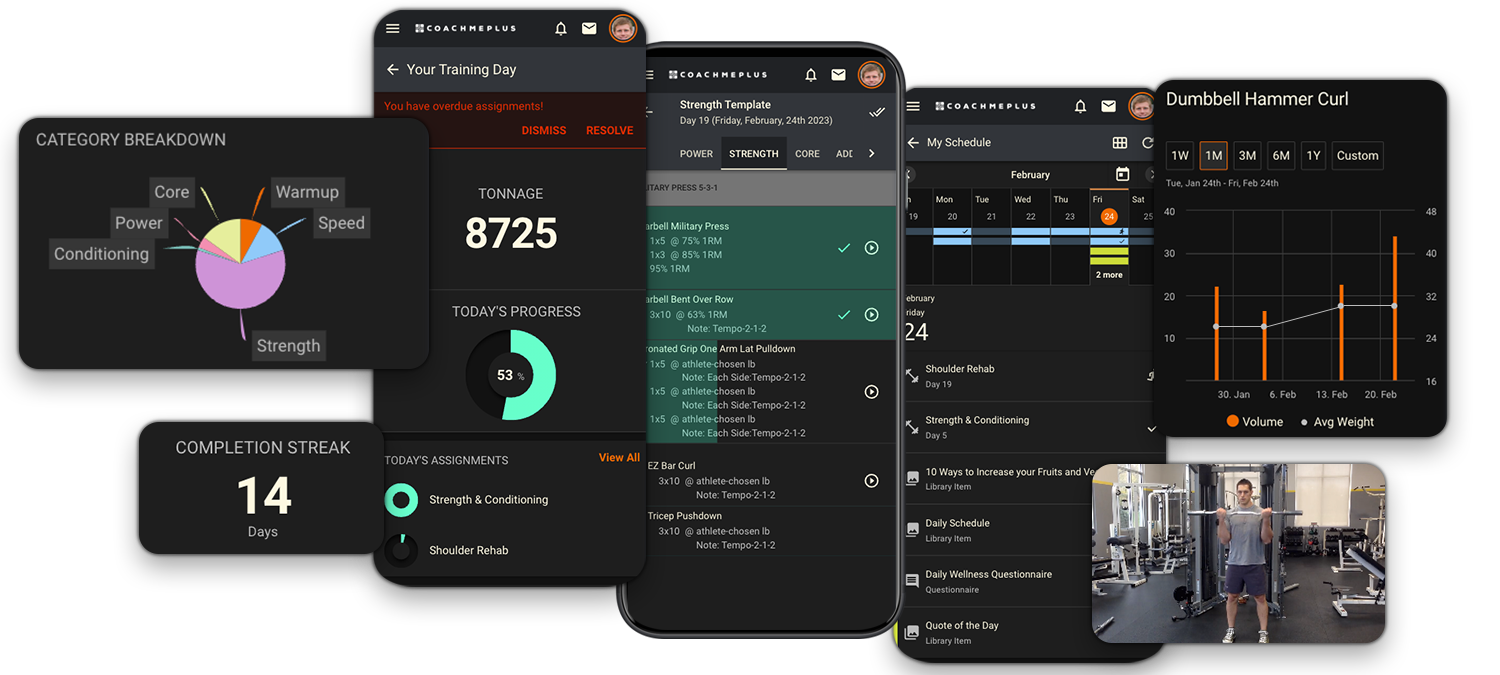

The innate problem is: If you have seven different types of measures and you have seven different types of software, it makes things a little bit complex. CoachMePlus lets us manage all our athletes on one central platform.

“The beauty of CoachMePlus is that it can integrate all these technologies, all these measurements like testing, training reporting and different imports into one common database so that you’re not switching between multiple programs.”

My interest was trying to find a system that could do this for us. CoachMePlus gave us a simple option. We could get our information in and we could visualize it. One of the biggest things, too, is that the support is outstanding. They are always there helping to make things better. It isn’t like they give you the system and say, “Good luck!” I’m on the phone with them about once a week, whether it’s a two-minute question or a 20-minute conversation.

“You ask how I found CoachMePlus, well, I was looking for a software piece that could help us with data management from multiple sources and give us a clean display and this was the one that I found to be the most useful for us.”

HOW DO YOU TEACH YOUR COACHING STAFF TO USE COACHMEPLUS?

This is definitely a process, yes. It is going to develop as we go along. The more I go to them and say, “Here is what I’m seeing,” whether it is a stress or fatigue trend or soreness trend or training load pieces, if I do that enough, they will be able to jump on any time and say, “I get this now.”

“I tend to think that just showing someone a chart doesn’t mean they will understand what it means.”

One of the big things that I try to do is: Rather than say, “Go on, check it out,” I will go over it with them and point to things we are seeing and show them the value of what I’m trying to get out of this graphic on the dashboard or whatever it may be. The more I do that, the more they can go through on their own and make decisions based on some of that information.

HOW DO YOU GO FROM COLLECTION OF DATA TO DECISION MAKING?

“The beauty of CoachMePlus is that we can import information, whether it be from a GPS or heart rate system or both, and see what those trends are over time.”

For one day’s session, we will have a number or a point on the graph, but how do we tell whether that was too much, not enough or just right? That’s why it is so important to visualize. For example, we can see that today was 20% more than yesterday or this week was 15% less than last week. And we can ask: Is that a trend we are comfortable with seeing? Data alone doesn’t paint a good picture; you need to turn the data into information as far as training load goes.
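The day-over-day and week-over-week comparisons West describes come down to a percent change. The sketch below is only an illustration of that arithmetic, not CoachMePlus functionality: the function name and the load values (in arbitrary units) are hypothetical, chosen so the results match the 20% and 15% figures in his example.

```python
def percent_change(current: float, previous: float) -> float:
    """Return the change from previous to current as a percentage of previous."""
    if previous == 0:
        raise ValueError("previous load must be non-zero")
    return (current - previous) * 100.0 / previous


# Hypothetical daily training loads in arbitrary units (0 = rest day).
last_week = [300, 350, 400, 0, 350, 300, 300]
this_week = [250, 300, 350, 0, 250, 250, 300]

# Today versus yesterday (the last two entries of this week).
day_change = percent_change(this_week[-1], this_week[-2])

# This week's total versus last week's total.
week_change = percent_change(sum(this_week), sum(last_week))

print(f"Today vs. yesterday: {day_change:+.0f}%")      # +20%
print(f"This week vs. last week: {week_change:+.0f}%")  # -15%
```

The numbers only describe the trend. Whether a 20% jump or a 15% drop is acceptable remains the coaching decision West describes.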

Over the course of time, you develop knowledge on what that information means. The wisdom piece is that coaching piece. We can ask, “Do you like the trend that you’re seeing?” and “What do you want to do about it?” What I’m getting at is that this system allows us to make better, more informed decisions on what we’re doing from a training standpoint.

DO YOUR ATHLETES UNDERSTAND THE VALUE OF THE DATA YOU TRACK?

It’s important to give feedback. When you’re asking a player to wear a heart rate monitor and they aren’t getting any feedback on it, there isn’t really much investment from the player. Take the soccer team, for example. We have 15 players who get in the game and a roster of 25 to 30 players. The players who don’t play have to make up some of that fitness so they are ready when called upon. We looked at high intensity distance covered during practice and we set a minimum of how much they needed to cover.

At the end of a session, I had a report from them that showed which guys had done enough and which needed to do, say, more sprints or something like that. It gives them the idea that we are trying to get to a certain level and it gives them feedback. It also gives them motivation. If you get it done in training, you aren’t going to have to do it after training. Players are also able to go on their iPhones and immediately see what they did during practice, which is nice. They are also competitors. So they go in and look at it and say, “I’m 17th in high intensity distance, who is No. 1?” There is motivation through the technology.
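The end-of-session report and the ranking players check on their phones can be sketched in a few lines. This is a hedged illustration, not the report West actually receives. The 600-metre minimum, the player names, the distances and both function names are made up for the example.

```python
# Hypothetical minimum high intensity distance for a session, in metres.
MIN_HIGH_INTENSITY_M = 600

# Hypothetical high intensity distance covered in one practice, in metres.
session = {"Player A": 820, "Player B": 540, "Player C": 610, "Player D": 300}


def top_up_report(distances: dict[str, int], minimum: int) -> dict[str, int]:
    """Map each player below the minimum to the distance still needed."""
    return {p: minimum - d for p, d in distances.items() if d < minimum}


def leaderboard(distances: dict[str, int]) -> list[tuple[int, str, int]]:
    """Return (rank, player, distance) tuples, highest distance first."""
    ordered = sorted(distances.items(), key=lambda kv: kv[1], reverse=True)
    return [(rank, p, d) for rank, (p, d) in enumerate(ordered, start=1)]


for player, shortfall in top_up_report(session, MIN_HIGH_INTENSITY_M).items():
    print(f"{player} needs {shortfall} m more after training")

for rank, player, distance in leaderboard(session):
    print(f"{rank}. {player}: {distance} m")
```

Players who reach the minimum in training do not appear in the top-up report at all, which mirrors the incentive described above.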

HOW DO YOU MANAGE OVER 400 STUDENT ATHLETES ACROSS 22 TEAMS?

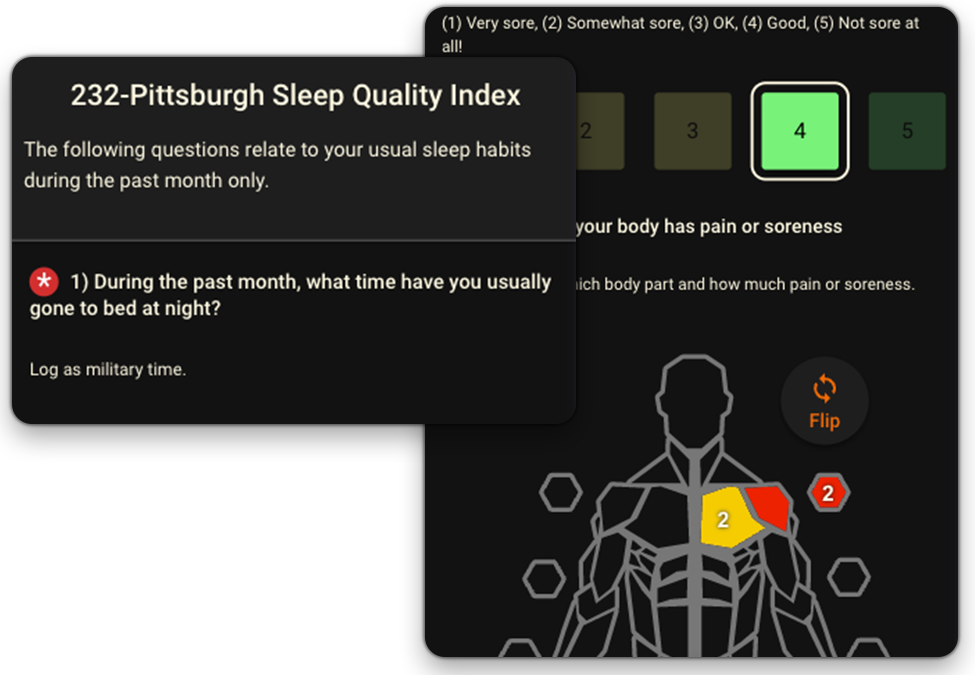

This is something we’re continuing to work on. One of the things I’m finding out from a testing standpoint is that we have tests across teams and sports that are relatively consistent. We also have tests that individual sports do that others may not do. The big thing that I’m working on is: We want to keep things consistent. Take some of the questionnaire pieces in there, for example. Each coach can come up with their own questionnaire. We could have 22 different teams with 22 different questionnaires.

“I think there is value in flexibility, but there also needs to be consistency.”

Everyone may have a question about stress or sleep, so we should have some standard templates across all sports. The more data we have, the more meaning we can get out of it, because we have a larger number of data points to make sense of.

We need a basic screen and assessment. It’s like when you go to the airport. You go through the screener and either they say you are good to go and you move on, or you’re not good and they have to take a look at why you’re not good. We don’t have time to do a ton of tests – think about if they did every available test at airport security, the line would go on forever. The idea is: We want a test that is a basic screen that gives us a baseline. The vertical jump is very simple to administer and very quick and we can see if we need a further analysis.

The vertical jump test has been a very good indicator of something that we had trouble measuring across sports before. Take the women’s rowing team, for example. They may not have the highest vertical jumps, but that doesn’t really matter. What matters is that the vertical jump indicates readiness. If it is a 20 on one day and then it is an 18, then there is an issue and we need to dig deeper into why that issue is happening.
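The screening idea here is to compare each athlete with their own baseline rather than with other athletes. The sketch below illustrates that with West’s 20-to-18 example. The 5% threshold and the function names are assumptions made for illustration, not values from the interview, and the jump heights are in whatever unit the team records.

```python
def jump_drop_percent(baseline: float, today: float) -> float:
    """Return how far today's jump is below the baseline, as a percentage."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - today) * 100.0 / baseline


def needs_follow_up(baseline: float, today: float,
                    threshold_percent: float = 5.0) -> bool:
    """Flag the athlete when the drop meets a (hypothetical) threshold."""
    return jump_drop_percent(baseline, today) >= threshold_percent


# West's example: a 20 one day and an 18 the next is a 10% drop.
print(jump_drop_percent(20, 18))  # 10.0
print(needs_follow_up(20, 18))    # True

# A lower but stable jump is not flagged, because the athlete is
# compared with their own baseline and not with other sports.
print(needs_follow_up(14, 14))    # False
```

A flag here only means "take a closer look", like the airport screener in the analogy. It does not say why the jump dropped.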

HOW DO YOU DECIDE WHICH SPORTS TECHNOLOGY TO ADOPT?

There are some fundamental things that I need to know. Whether we want to do a strength test or power test or whatever, what we want to be able to do is look at trends over time. Are we maintaining qualities that we want? The issue with different technologies is that there are some things that are pretty cool, but we have to ask how they are actually useful for us. From a training standpoint, we also want to know:

Are we doing enough?

Are we doing too much?

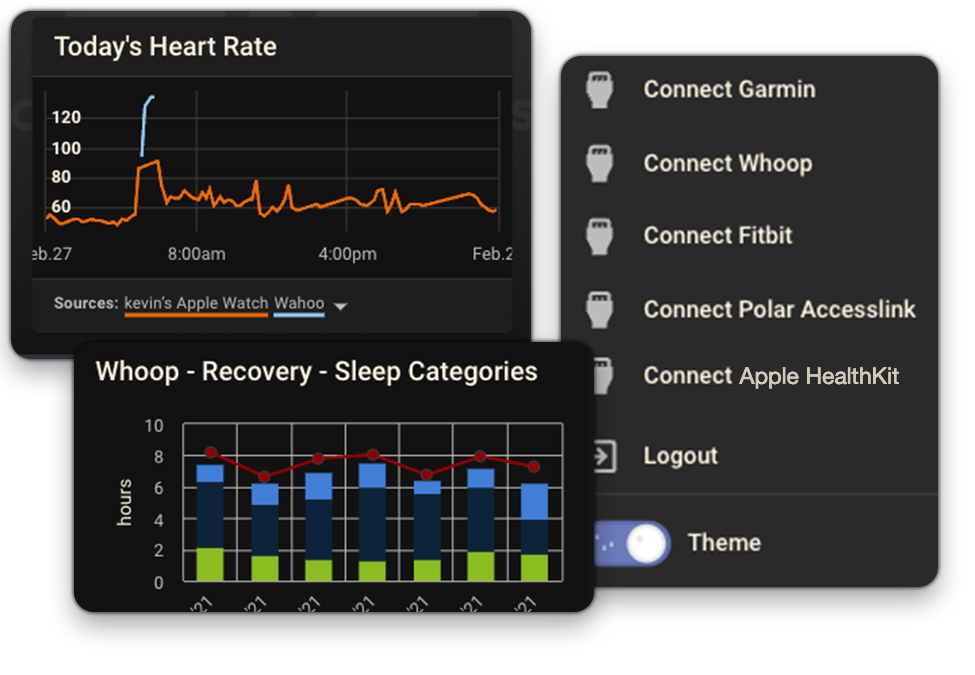

For example, we want something that is going to give us meaningful information on training load. Well, we can do that through heart rate monitoring and questionnaires that look at internal load. CoachMePlus integrates with over 50 sports tech devices.

“The game-changer now is in GPS and accelerometer data that actually gives you the amount of work that the athlete has done.”

If we can know where they are in terms of load by doing our testing, we can prescribe a certain amount of training load that we can measure and quantify, then look at what effect that load has on readiness. One of the things we use is the vertical jump test. We found that there is a connection between that jump and how much distance players covered in a match.

See, we want to capture the training load and to capture the effect of that training load. Anyway, when we are looking at any technology piece, we are asking how it helps us measure either the training load or how that load affects readiness, not just whether the technology is cool.